With advanced large language models like ChatGPT or Gemini, the line between human and machine writing is more blurred than ever.

So, how can you tell if a piece of content was generated by AI, and how reliable is this detection?

In this article, we’ll explore the signs that can help you identify AI content, and how reliable those signs are.

Is ChatGPT Writing Detectable?

Yes, there are certain indicators that might suggest content is ChatGPT-generated, but determining this with complete certainty can be tricky.

In fact, studies have shown that even trained educators sometimes misjudge whether student work is AI-generated. This suggests that while there are clues, the human ability to detect AI writing is far from perfect.

One of the biggest challenges is that people can easily edit ChatGPT’s output to make it less detectable. A few changes to phrasing, tone, or structure can make AI-generated content feel more human, blending it seamlessly with other types of written work.

Another complicating factor is the variability in how ChatGPT responds to different prompts. Depending on the input, the AI can generate content that ranges from highly generic to surprisingly nuanced, which makes it challenging to set consistent rules for detection.

Ultimately, while it’s possible to make educated guesses about whether content is AI-generated, you can never be 100% certain. There are tools available to help with detection, but even these have limitations.

Signs of ChatGPT-Generated Writing

Even though detecting ChatGPT-generated content is difficult, some signs make it more likely that a piece of writing was produced by AI:

1) Predictable AI Structures

Even when the wording changes, the underlying scaffolding often stays the same. Once you train your eye, you’ll spot it in seconds.

Repetitive sentence length and rhythm

Sentences tend to be similar in length and complexity.

Read a section out loud. Do you notice a steady, almost metronomic rhythm?

Do sentences stack neatly one after the other, without any short interruptions or long, messy detours?

Human writers don’t do that consistently. You pause. You rush. You emphasize. You break your own flow when you think of something mid-sentence. AI rarely does this.
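To make the “metronomic rhythm” check less subjective, you can measure how much sentence length varies. The sketch below is a rough heuristic, not a detector: it assumes sentences end in ., ! or ?, counts words by splitting on whitespace, and uses the coefficient of variation (standard deviation divided by mean) as its measure. The function names and sample texts are hypothetical.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence (naive split after ., ! or ?)."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length (stdev / mean).

    Lower values mean a more uniform, metronomic rhythm.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.stdev(lengths) / statistics.mean(lengths)


uniform = ("The tool saves time for teams. It also improves overall quality. "
           "Users report better results each week. The setup process is very simple.")
varied = ("It saves time. Honestly, I didn't expect that, because the first week "
          "was a mess of broken imports and angry messages. Then it clicked. Mostly.")

print(round(length_variation(uniform), 2))  # 0.09
print(round(length_variation(varied), 2))   # 1.26
```

The number only means something when you compare texts of a similar type and length; any cut-off value would be an assumption of your own.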

Paragraphs built from identical molds

Another strong signal is paragraph uniformity.

AI paragraphs often follow the same internal recipe:

- Topic sentence that restates the heading

- Explanation in neutral terms

- Supporting sentence that adds little new information

- Soft concluding sentence that transitions to the next idea

Now repeat that structure five times in a row.

Nothing is wrong with any single paragraph. The problem is accumulation. Human writing varies more because humans don’t think in clean blocks. We explore, digress, double back, or zoom in unexpectedly.

Overuse of em dashes

AI loves:

- Em dashes used for emphasis — like this — repeatedly

- Commas placed very carefully

- Parenthetical clarifications that feel unnecessary

Again, one em dash means nothing. Ten em dashes used in the same rhetorical way across one article is different.
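Since density is what matters, em dashes are easy to count. This hypothetical helper reports em dashes (U+2014) per 1,000 words. It ignores double hyphens (--) and en dashes, which some writers use instead, and it says nothing about whether the dashes are used in the same rhetorical way.

```python
import re


def em_dashes_per_1000_words(text: str) -> float:
    """Em dashes (U+2014) per 1,000 words; a word is a run of word characters or apostrophes."""
    words = re.findall(r"[\w']+", text)
    if not words:
        return 0.0
    return text.count("\u2014") / len(words) * 1000


sample = "AI loves em dashes \u2014 like this \u2014 repeatedly."
print(round(em_dashes_per_1000_words(sample), 1))  # 285.7
```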

Repeated conclusions and “double wrapping”

One of the clearest structural tells appears at the end.

AI frequently:

- Concludes the article

- Then summarizes the conclusion

- Then adds “key takeaways” that restate both

You’ll see phrases like:

- “In conclusion…”

- “To sum up…”

- “Ultimately…”

- Followed by bullet points that say the same thing again

This happens because AI is trained to be helpful and safe. It wants to make sure you didn’t miss anything. Humans usually stop once the point is made.

Decorative analogies

AI uses analogies a lot. Too much.

Especially analogies that:

- Explain something already simple

- Don’t add new insight

- Could be removed without changing the meaning

For example, comparing content strategy to “building a house” or “navigating a landscape” without extending the analogy in a useful way.

2) Repetitive AI Formulations

If you read a lot of AI-assisted content, you’ll start noticing the same phrasing reappearing under different topics.

“Not only… but also…”

One of the most common AI formulations is symmetrical construction.

Examples you’ll see often:

- “Not only does X improve Y, but it also enhances Z.”

- “Whether you’re a beginner or an experienced professional…”

- “Both A and B play a crucial role in…”

These constructions sound polished. That’s the problem.

Humans use them occasionally, usually for emphasis. AI uses them as defaults because they’re safe and grammatically stable. When you see them repeated multiple times in one article, it’s rarely a coincidence.

Tricolons

AI loves lists of three.

You’ll see phrases like:

- “Clear, concise, and effective”

- “Faster, smarter, and more scalable”

- “Accurate, reliable, and actionable”

Again, there’s nothing inherently wrong here. The issue is density.
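Tricolon density is harder to measure precisely, but a crude regular expression catches the common “X, Y, and Z” shape. This sketch is a heuristic built on assumptions: it only matches lists whose first two items are single words, and it also flags ordinary lists such as dates or product names, so treat a high score as a prompt to reread rather than as evidence.

```python
import re

# Matches "X, Y, and Z" or "X, Y and Z" (also with "or"). The match stops after
# the first word of the last item, so "and more scalable" matches as "and more".
TRICOLON = re.compile(r"\b\w+, \w+,? (?:and|or) \w+", re.IGNORECASE)


def tricolon_density(text: str) -> float:
    """Average number of three-item lists per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    return len(TRICOLON.findall(text)) / len(sentences)


sample = ("Our platform is faster, smarter, and more scalable. "
          "Reports are clear, concise, and effective. "
          "The data is accurate, reliable, and actionable.")
print(tricolon_density(sample))                      # 1.0
print(tricolon_density("We tested it. It worked."))  # 0.0
```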

Excessive signposting and “guiding” language

AI is very polite. Too polite.

It constantly tells you what it’s about to do:

- “Let’s take a closer look…”

- “Let’s break this down…”

- “Now, let’s explore…”

- “Here’s why this matters…”

This kind of language is useful in moderation. But AI overuses it because it’s trained to be explicit and instructional.

Balanced sentences that avoid commitment

Another giveaway is excessive balance.

AI tends to hedge:

- “While X has its advantages, it also comes with challenges.”

- “This approach can be effective in some cases, but not in others.”

- “There is no one-size-fits-all solution.”

These sentences are technically true. They’re also empty if they’re not followed by a clear stance.

Humans usually have opinions, even cautious ones. We say what we would do. We say what we wouldn’t do. AI prefers neutrality because neutrality is safer.

3) Typical AI Vocabulary

Vocabulary is often where people start when trying to detect AI writing.

The issue isn’t that AI uses bad words. It uses default words. Safe, flexible, context-agnostic language that works almost anywhere.

“Bullshit” words

AI is trained on massive corpora. As a result, it favors vocabulary that travels well across industries, topics, and tones.

Words like:

- Game changer

- Crucial

- Transformative

- Innovative

- Powerful

These words sound impressive, but they rarely force the writer to commit to anything concrete.

Stock phrases

Some phrases appear so often in AI writing that they’ve become near-tells.

Examples you should treat with suspicion:

- “In today’s fast-paced world…”

- “It’s important to note that…”

- “Let’s face it…”

- “Imagine a scenario where…”

- “At the end of the day…”
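If you review a lot of content, a small script can flag the stock phrases and default vocabulary listed above. The phrase list in this sketch is taken from this article and is hypothetical rather than validated. A hit only tells you where to look, because humans use these phrases too.

```python
import re
from collections import Counter

# Hypothetical starting list, taken from the examples in this article.
# Matching is exact after lowercasing, so variants such as "game-changer"
# are not caught.
STOCK_PHRASES = [
    "in today's fast-paced world",
    "it's important to note that",
    "let's face it",
    "imagine a scenario where",
    "at the end of the day",
    "let's take a closer look",
    "let's break this down",
    "game changer",
    "crucial",
    "transformative",
    "landscape",
    "realm",
]


def count_stock_phrases(text: str) -> Counter:
    """Count whole-word occurrences of each stock phrase in the text."""
    # Normalise curly apostrophes (U+2019) so they match the straight ones above.
    lowered = text.lower().replace("\u2019", "'")
    counts = Counter()
    for phrase in STOCK_PHRASES:
        hits = len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        if hits:
            counts[phrase] = hits
    return counts


sample = ("In today\u2019s fast-paced world, AI is a game changer. "
          "It\u2019s important to note that the landscape is crucial. "
          "At the end of the day, the landscape keeps shifting.")
print(count_stock_phrases(sample).most_common(3))
```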

Abstract vocabulary

AI loves abstract places:

- The landscape

- The realm

- The ecosystem

- The space

These words allow the model to talk around a topic without grounding it.

For example:

“In the evolving content marketing landscape…”

That sounds fine, but it avoids specifics. Which part of content marketing? B2B? SEO blogs? Product documentation? Agency workflows?

Polished but emotionally neutral language

Another vocabulary signal is emotional flatness.

AI avoids:

- Mild frustration

- Doubt

- Friction

- Regret

- Strong preference

Instead, it uses polite, agreeable wording:

- Effective

- Helpful

- Valuable

- Useful

- Worth considering

This creates text that feels inoffensive but distant.

4) Lack of Opinions and Lived Experience

AI-generated content is usually accurate in a broad sense. The problem is that it stays safely above reality.

You’ll see statements like:

- “It’s important to focus on quality content.”

- “Consistency plays a key role in success.”

- “Understanding your audience is essential.”

All of these are true. None of them help you make a decision.

Another strong signal is grammatical distance.

AI often avoids:

- “I tried…”

- “We learned the hard way…”

- “If you do X, you’ll run into Y.”

Instead, it relies on impersonal or generalized constructions:

- “It can be beneficial to…”

- “Businesses should consider…”

- “One approach is to…”
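This kind of grammatical distance can be roughly approximated by counting first-person pronouns. This is a hypothetical proxy, not an established measure: the pronoun list is an assumption, and plenty of human writing (documentation, news, academic prose) avoids the first person on purpose.

```python
import re

# Hypothetical pronoun list, including a few common contractions.
FIRST_PERSON = {"i", "i'm", "i've", "i'd", "me", "my", "we", "we're",
                "we've", "our", "us"}


def first_person_rate(text: str) -> float:
    """First-person pronouns per 100 words, a rough proxy for lived experience."""
    words = re.findall(r"[a-z']+", text.lower().replace("\u2019", "'"))
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in FIRST_PERSON)
    return 100 * hits / len(words)


human = "I tried this last year. We learned the hard way that our drafts needed editing."
generic = "It can be beneficial to edit drafts. Businesses should consider one approach."
print(first_person_rate(human))    # 20.0
print(first_person_rate(generic))  # 0.0
```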

AI includes examples, but they’re often illustrative rather than real.

“Imagine a company that implements AI tools and sees improved efficiency.”

Are AI Detectors Reliable?

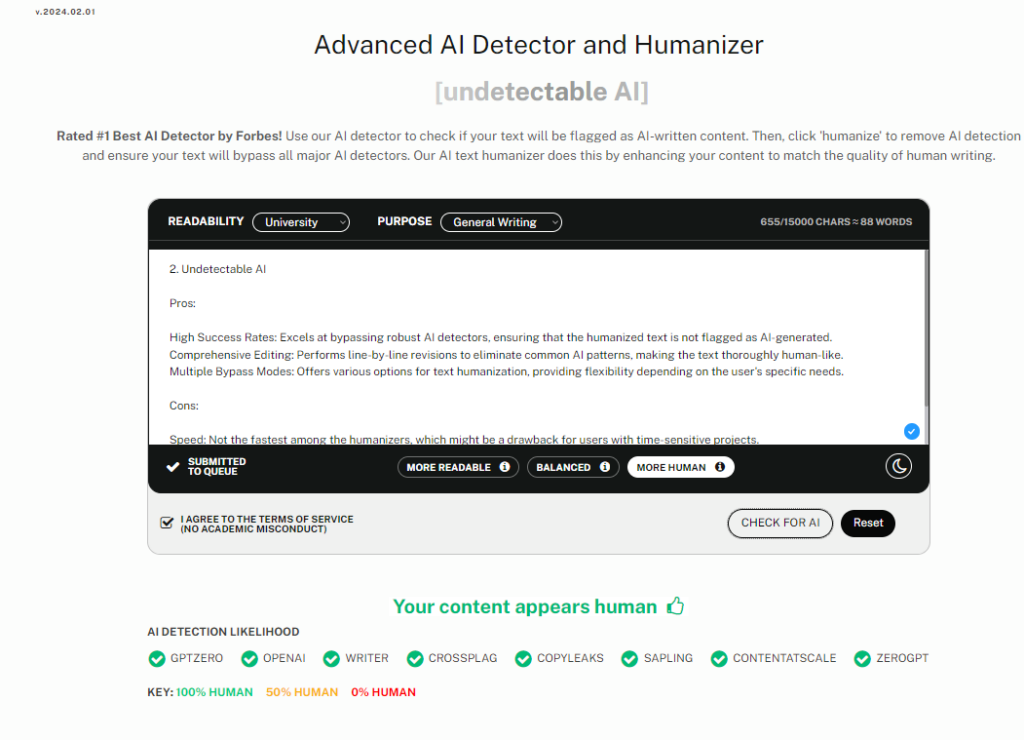

When it comes to identifying AI-generated content, AI detectors are one of the main tools available. However, relying solely on tools such as Originality.AI and Copyleaks to verify the authenticity of content has its limitations:

- These detectors often struggle with accuracy when they encounter content that has been deliberately modified to evade detection. In some cases, accuracy rates for detecting AI-generated content have dropped as low as 17.4%, which is far from reliable.

- Another significant issue with AI detectors is their tendency to display bias. Research has demonstrated that content written by non-native English speakers is more likely to be flagged as AI-generated, even when it’s completely human-written. This bias can lead to unfair judgments, especially in academic or professional settings.

- AI detectors are also inconsistent. Sometimes these tools return an “uncertain” verdict, meaning they can’t say whether the content is human- or AI-generated, and when they do commit to an answer, it can be wrong in either direction, producing both false positives and false negatives. This makes the tools less trustworthy.

- AI detectors also lose significant accuracy with each new LLM release. Because large language models advance so quickly, detection methods must constantly adapt to new versions. For example, there’s a noticeable difference in detection accuracy between older models like GPT-3.5 and more advanced ones like GPT-4o or Claude 3.5.

- Formal, highly structured writing is also more likely to be flagged as AI-generated. This is why some detection tools, such as Originality.AI, advise users to adopt a more informal writing style to avoid false flags.